Fact Finder - History

Television (Electronic)

You probably use a television every day without thinking twice about it. But behind that flat screen sits over a century of fierce rivalries, accidental discoveries, and legal battles that shaped what you're watching right now. A teenager sketched the blueprint. A corporation nearly stole the credit. And a single broadcast reached 650 million people at once. If any of that surprises you, you're only getting started.

Key Takeaways

- Philo Farnsworth sketched the first all-electronic television design at age 15, inspired by farm plowing patterns for horizontal scanning lines.

- The first fully electronic television image ever transmitted was simply a straight black line, sent by Farnsworth on September 7, 1927.

- CRTs fire electron beams at up to 20,000 volts toward phosphor-coated screens, striking millions of precise points per second to form images.

- Although NTSC established 525 scan lines, only 480 were visible; the rest carried blanking intervals and closed-captioning data.

- The Apollo 11 Moon landing was watched by an estimated 650 million viewers worldwide, demonstrating television's unprecedented global reach.

Who Actually Invented the Electronic Television?

When you think of television's invention, names like Marconi or Edison might come to mind, but the true pioneer of electronic television was a young American inventor named Philo Farnsworth. His inventiveness showed early: at just 15, he sketched the world's first all-electronic television design in his high school chemistry class. Inspired by the parallel rows of plowed farm fields, he realized that an image could be scanned in horizontal lines and turned into an electronic picture. His determination paid off on September 7, 1927, with the first successful electronic television transmission. After years of legal battles with RCA, the U.S. Patent Office ultimately awarded Farnsworth priority of invention for the image dissector in 1934.

Why Mechanical Scanning Lost to Electronic Television

Farnsworth's victory over RCA wasn't just a legal win — it marked the beginning of the end for an entirely different kind of television technology. Mechanical scanning couldn't overcome its own mechanical inertia, and synchronization challenges between transmitter and receiver disks made stable images nearly impossible.

Here's why mechanical systems failed:

- Images maxed out at 240 lines of resolution, producing fuzzy, dim pictures

- Spinning disks caused excessive flickering and low frame rates

- Bulky, fragile components broke down frequently

- Overheating lights made broadcasting impractical

- Electronic CRTs outperformed mechanical systems on every one of these counts

The shift to electronic television introduced electronic scanning technology, which delivered dramatically improved image clarity, stability, and scalability compared to anything mechanical systems could produce. Vladimir Zworykin's invention of the Iconoscope camera tube eliminated the need for mechanical components entirely, improving both the stability and clarity of televised images in ways spinning disks never could.

Philo Farnsworth Transmitted a Dollar Sign at Age 21

On September 7, 1927, a 21-year-old Philo Farnsworth transmitted the world's first fully electronic television image — a simple straight black line — from a camera in one room to a receiver in another at 202 Green Street, San Francisco.

Among Farnsworth's earliest milestones, this breakthrough proved his fully electronic system worked without mechanical disks or mirrors. He went on to transmit a triangle and a dollar sign in those early sessions. Much like the Rosetta Stone's three scripts allowed scholars to unlock the mysteries of ancient Egyptian hieroglyphs, Farnsworth's transmissions served as a foundational key to the future of visual communication.

How a Cathode Ray Tube Creates a TV Picture

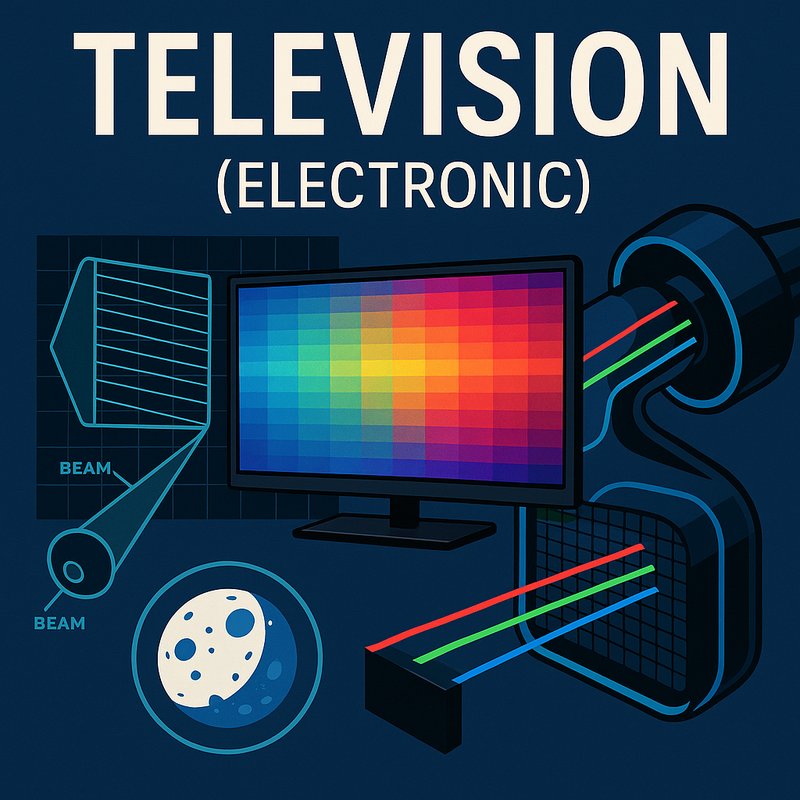

The cathode ray tube, or CRT, works by firing a focused beam of electrons from a heated cathode at one end of a sealed glass vacuum tube toward a phosphor-coated screen at the other.

Electron optics shape and accelerate that beam while electromagnetic coils steer it across the screen in a raster pattern. The interior of the tube is evacuated to less than a millionth of atmospheric pressure to prevent the electron beam from scattering.
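The raster pattern described above can be sketched in a few lines. This is a simplified, hypothetical model: it treats the screen as a grid of discrete spots and scans progressively (every line in order), whereas real broadcast CRTs swept the beam continuously and NTSC sets interlaced alternate lines. The function names, the 640 × 480 grid, and the 30 frames per second in the usage lines are illustrative assumptions, not figures from any specific set.

```python
from typing import Iterator, Tuple


def raster_scan(width: int, height: int) -> Iterator[Tuple[int, int]]:
    """Yield (x, y) beam positions in simple progressive raster order.

    Hypothetical model: the beam visits discrete spots, left to right along
    each line, then steps down to the next line.
    """
    for y in range(height):      # vertical deflection: one line further down each pass
        for x in range(width):   # horizontal deflection: sweep left to right
            yield (x, y)
        # Horizontal retrace (beam blanked, back to the left edge) would happen here.


def spots_per_second(width: int, height: int, frames_per_second: float) -> float:
    """Number of spot visits per second if every spot is redrawn each frame."""
    return width * height * frames_per_second


print(list(raster_scan(3, 2)))        # tiny 3 x 2 grid, in beam order
print(spots_per_second(640, 480, 30))  # assumed grid and frame rate
```

With the assumed 640 × 480 grid redrawn 30 times per second, the beam makes about 9.2 million spot visits per second, consistent with the millions of electron strikes per second described in this section.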

Here's what makes the picture appear:

- A control grid adjusts beam intensity for brightness

- Deflection coils bend the beam horizontally and vertically

- Electrons strike phosphor dots, producing visible light

- Phosphor persistence keeps each dot glowing briefly

- Three separate guns handle red, green, and blue

You're effectively watching millions of precisely timed electron strikes per second, combining to create a full-color, continuous-looking image. That beam is accelerated by voltages supplied from a high-voltage flyback transformer, reaching up to around 20,000 volts in color television sets.
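The "up to around 20,000 volts" figure translates into a remarkably fast beam. As a worked example, the sketch below applies the standard relativistic relation for an electron accelerated from rest through a potential difference V: it gains kinetic energy eV, so its Lorentz factor is γ = 1 + eV/(m_e c²). It assumes the electron starts at rest and ignores real-tube effects such as space charge; the 20,000 V input is simply the figure quoted here, and the function name is hypothetical.

```python
import math

ELECTRON_REST_ENERGY_EV = 510_998.95   # m_e * c^2 expressed in electronvolts (CODATA)
SPEED_OF_LIGHT_M_S = 299_792_458.0     # exact, by SI definition


def electron_speed(accelerating_volts: float) -> float:
    """Speed (m/s) of an electron accelerated from rest through the given voltage."""
    # Kinetic energy gained is e*V, i.e. `accelerating_volts` electronvolts.
    gamma = 1.0 + accelerating_volts / ELECTRON_REST_ENERGY_EV
    # From gamma = 1 / sqrt(1 - beta^2), solve for beta = v / c.
    beta = math.sqrt(1.0 - 1.0 / gamma ** 2)
    return beta * SPEED_OF_LIGHT_M_S


v = electron_speed(20_000)
print(f"{v / 1000:,.0f} km/s, about {v / SPEED_OF_LIGHT_M_S:.2f} c")
```

At 20,000 volts the result is roughly 0.27 c, a little over a quarter of the speed of light, which is why the beam can cross the tube and strike the phosphor within a tiny fraction of each scan line.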


How Television Brought the 1969 Moon Landing to 650 Million Viewers

When Apollo 11's Neil Armstrong set foot on the Moon on July 20, 1969, an estimated 650 million people across multiple continents watched it happen in real time, a feat that wouldn't have been possible without television's rapidly maturing broadcast infrastructure. It was television's greatest achievement in global synchronization: a single live signal, routed from the Moon to audiences around the world at the same moment.

In the UK alone, 16 million viewers tuned in as signals received by Cornwall's Goonhilly Earth Station traveled to London's Post Office Tower before reaching homes nationwide. Armstrong's first steps aired at 03:56 BST on July 21.

In the US, an estimated 125 to 150 million viewers watched across competing networks. Because Nielsen published only household figures for the event, the exact size of the American audience is difficult to confirm, yet the Apollo 11 Moon landing still stands as one of the most-watched broadcasts ever recorded. Television hadn't just covered history; it had delivered history directly to you, wherever you sat.

BBC anchors Cliff Michelmore, James Burke, and Patrick Moore guided UK audiences through the event, while the corporation's coverage stretched to an impressive 27 hours of broadcasting across the mission's duration. Just as multi-spectral camera technology later revealed hidden layers beneath the Mona Lisa, advances in broadcast technology during this era were uncovering new possibilities for what television could achieve on a global scale.

The Color TV Battle RCA Won in 1953

Before RCA's all-electronic color system became the American standard, CBS nearly locked the country into a format that couldn't talk to the millions of black-and-white sets already sitting in living rooms.

The CBS controversy unfolded fast. RCA's comeback relied on courts, consumers, and timing:

- FCC approved CBS's incompatible color system on October 11, 1950

- RCA sued immediately, but the Supreme Court upheld CBS on May 28, 1951

- RCA increased its TV market share by 50 percent during the legal delay

- CBS rescinded its own standard in March 1953

- FCC adopted RCA's NTSC system on December 17, 1953

The first compatible color sets hit shelves December 30, 1953, with 15-inch screens. The CBS color wheel system required a bulky mechanical converter attached to sets, making it impractical for the average consumer.

CBS formally launched its color broadcasts in June 1951, but because existing black-and-white sets couldn't display the incompatible signal, viewership was close to zero. After just four months of dismal ratings, CBS halted its colorcasting efforts.

Why Early TVs Only Had 200 Lines of Resolution

If you've ever examined an early television test pattern, you might've noticed it only tested up to 200 lines of resolution, far fewer than the 525 scan lines set by the NTSC standard in 1941.

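CRT limitations played a significant role here. Electron guns scanning phosphor screens line by line couldn't reliably reproduce fine detail, which made 200 TV lines the practical ceiling for test patterns used to measure horizontal resolution and to detect defects like ringing and linearity errors. TV lines and scan lines are different measures: scan lines fix the picture's vertical structure, while TV lines count how many alternating light and dark vertical lines can be told apart across a width of screen equal to the picture height.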

Analog bandwidth imposed additional constraints: each broadcast channel was allotted a fixed slice of radio spectrum (6 MHz in the United States), which capped how much fine detail the picture signal could carry.
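A common rule of thumb shows how bandwidth caps horizontal detail: one cycle of the highest video frequency a channel passes can draw, at most, one dark line and one light line. The sketch below applies that rule assuming approximate NTSC figures, a luminance bandwidth of 4.2 MHz (the picture-detail share of a 6 MHz U.S. channel) and a visible line duration of about 52.6 µs; the function name is hypothetical. The result is a theoretical ceiling, not what a typical set of the era actually resolved.

```python
def horizontal_resolution_tvl(bandwidth_hz: float,
                              active_line_s: float,
                              aspect_ratio: float = 4 / 3) -> float:
    """Rough bandwidth-limited horizontal resolution in TV lines per picture height."""
    # One signal cycle at the highest passed frequency = one dark + one light line.
    cycles_per_active_line = bandwidth_hz * active_line_s
    lines_across_full_width = 2 * cycles_per_active_line
    # TV lines are counted over a width equal to the picture height,
    # so divide by the width-to-height aspect ratio.
    return lines_across_full_width / aspect_ratio


# Assumed approximate NTSC values: 4.2 MHz luminance bandwidth, ~52.6 µs visible line.
print(round(horizontal_resolution_tvl(4.2e6, 52.6e-6)))
```

The calculation lands near 330 TV lines, comfortably above the 200-line charts described here, which is consistent with those charts reflecting practical rather than theoretical limits.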

The EIA 1956 resolution chart reflected these real-world boundaries rather than theoretical maximums. So while 525 lines defined the standard, what your screen could actually display and what broadcast signals could deliver were entirely different things. Of those 525 lines, only 480 were visible, as the remaining lines were consumed by the vertical blanking interval and closed-captioning data.

The NTSC's choice of 525 scan lines was itself a product of its time, selected specifically because of the vacuum-tube technology limitations that constrained what broadcast and receiver hardware could reliably process in the early 1940s.
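One commonly cited reason 525 suited vacuum-tube hardware is that it factors into small odd numbers, 3 × 5 × 5 × 7, so timing circuits could derive the field rate from a master oscillator through a short chain of simple dividers. The sketch below illustrates that arithmetic under stated assumptions: a nominal monochrome-era master frequency of 31,500 Hz, twice the 15,750 Hz line rate (NTSC drew each frame as two interlaced fields of 262.5 lines at 60 Hz), divided down to the 60 Hz field rate. The helper names are hypothetical, and this is an illustration of the arithmetic, not a schematic of any particular sync generator.

```python
from typing import List


def prime_factors(n: int) -> List[int]:
    """Return the prime factors of n in ascending order (trial division)."""
    factors = []
    divisor = 2
    while divisor * divisor <= n:
        while n % divisor == 0:
            factors.append(divisor)
            n //= divisor
        divisor += 1
    if n > 1:
        factors.append(n)
    return factors


def divider_chain(master_hz: int, divisors: List[int]) -> List[int]:
    """Divide a master frequency stage by stage, returning each stage's output."""
    outputs = []
    freq = master_hz
    for d in divisors:
        if freq % d:
            raise ValueError(f"{freq} Hz does not divide evenly by {d}")
        freq //= d
        outputs.append(freq)
    return outputs


LINES_PER_FRAME = 525
MASTER_HZ = 31_500  # assumed nominal value: 2 x the 15,750 Hz monochrome line rate

print(prime_factors(LINES_PER_FRAME))          # [3, 5, 5, 7]
print(divider_chain(MASTER_HZ, [7, 5, 5, 3]))  # [4500, 900, 180, 60]
print(MASTER_HZ // 2)                          # 15750, the line rate
```

Each stage divides by only 3, 5, or 7, the kind of small ratio a single tube stage could handle reliably, and the chain ends at the 60 Hz field rate.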

How TV Screens Went From Liquid Crystals to OLED

While early television struggled to push past 200 lines of resolution due to CRT and broadcast limitations, the screens themselves would eventually undergo a far more radical transformation — from bulky cathode-ray tubes to the razor-thin panels you're watching today.

Liquid crystal evolution moved fast once manufacturers solved early performance issues:

- Friedrich Reinitzer discovered liquid crystals in 1888

- Twisted nematic LCD patents emerged in 1970

- Sharp mass-produced LCD watches by 1975

- Matsushita received Corning's fusion-formed glass LCD in 1986

- LCD surpassed plasma displays by 2007

OLED commercialization pushed things further. Kodak's 1987 OLED invention led to self-emissive panels that need no backlight, allowing thinner, even flexible, designs with deeper blacks than any LCD could match.

The cathode ray tube, first invented in 1897, went on to dominate display technology throughout the 20th century despite being inefficient, bulky, heavy, and built with hazardous materials. The first commercial color CRT was produced in 1954, and CRTs remained the dominant display technology for televisions and monitors for over half a century.

Why Your TV Redraws the Picture 60 Times Every Second

Every time you sit down to watch television, your screen quietly pulls off a technical feat you probably never notice: redrawing the entire picture 60 times every second. This refresh rate, measured in hertz, is your display's hardware capability — separate from whatever frame rate your content runs at.

At 60 Hz, your TV keeps pace with fast-moving scenes, reducing blur and minimizing eye strain during long viewing sessions. It also covers the 24 to 60 fps range most everyday content uses, making it sufficient for typical watching.

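Problems arise when refresh and frame rates don't divide evenly. A 24 fps film on a 60 Hz screen needs 2.5 refreshes per frame, so the TV either repeats frames in an alternating three-and-two pattern (3:2 pulldown), which can add a slight judder, or generates in-between frames through interpolation. That interpolation, sold as motion smoothing, can make cinematic movies look oddly like live video: the infamous soap opera effect. Fortunately, motion smoothing can be turned off or reduced through your TV's picture settings, often under labels that vary by brand and model.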
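One way a TV can fit 24 fps film into 60 Hz without interpolating is to repeat frames unevenly. The sketch below counts how many screen refreshes each content frame occupies when frames are only repeated, never synthesized; the function name and the ceiling-based assignment are illustrative choices, not any manufacturer's algorithm.

```python
from typing import List


def refresh_counts(content_fps: int, refresh_hz: int, frames: int) -> List[int]:
    """How many screen refreshes each content frame occupies, repeating frames only.

    Frame i starts at refresh ceil(i * refresh_hz / content_fps); no new frames
    are synthesized, so this models pulldown rather than motion smoothing.
    """
    def ceil_div(a: int, b: int) -> int:
        return -(-a // b)

    counts = []
    for i in range(frames):
        start = ceil_div(i * refresh_hz, content_fps)
        end = ceil_div((i + 1) * refresh_hz, content_fps)
        counts.append(end - start)
    return counts


print(refresh_counts(24, 60, 4))  # [3, 2, 3, 2]: the 3:2 pulldown cadence
print(refresh_counts(60, 60, 4))  # [1, 1, 1, 1]: one refresh per frame
```

At 60 Hz each refresh lasts about 16.7 ms, so film frames alternate between roughly 50 ms and 33 ms on screen. That uneven rhythm is the judder motion smoothing tries to hide by interpolating new frames instead.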

For gaming, stepping up to 120 Hz becomes genuinely worthwhile, particularly for console gamers using a PS5 or Xbox Series X, where Variable Refresh Rate (VRR) technology can synchronize your TV's refresh rate to your game's frame rate, eliminating screen tearing and reducing stutter as long as the frame rate stays within the TV's supported VRR range.