Fact Finder - Science and Nature

Entropy and the Second Law of Thermodynamics

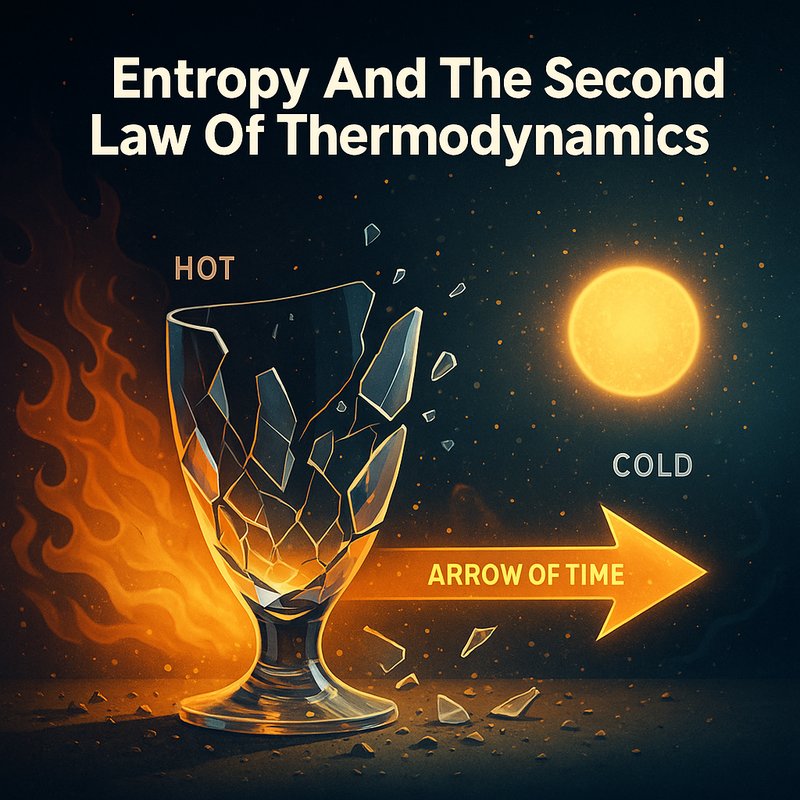

Entropy is something you encounter every single day, even if you don't realize it. It measures how thermal energy spreads out and becomes unavailable for useful work. The Second Law of Thermodynamics tells you that the total entropy of an isolated system never decreases and that every spontaneous process increases it, which is why physicists connect it to the direction in which time moves. It's why your coffee cools and why perpetual motion machines are impossible, and a room that drifts from tidy to messy is a handy everyday picture of the same tendency. Stick around, and you'll uncover just how far entropy's reach truly extends.

Key Takeaways

- Entropy measures a system's thermal energy, per unit of temperature, that is unavailable for useful work, reflecting how dispersed and disorganized energy becomes within the system.

- The Second Law states that the total entropy of an isolated system never decreases; every spontaneous process raises it, which links irreversible processes to the forward direction of time.

- For heat Q transferred reversibly at a constant absolute temperature T, the entropy change is ΔS = Q/T.

- Living organisms maintain internal order by exporting entropy to their surroundings, keeping their local entropy low without violating the Second Law.

- In the standard picture of the universe's ultimate fate, "heat death" arrives after even black holes have evaporated, leaving energy spread uniformly in a maximum-entropy equilibrium.

What Entropy Actually Is and Why Physics Depends on It

Entropy sits at the heart of thermodynamics. It measures a system's thermal energy per unit temperature that is unavailable for doing useful work. It's a thermodynamic state variable, meaning it depends only on a system's current state, not on how the system got there. Rudolf Clausius introduced the concept in 1850 and gave it the name "entropy" in 1865, handing physics a precise way to test whether a process obeys the Second Law.

Entropy is often described as disorder: high entropy means energy is dispersed and disorganized, while low entropy means it's concentrated and ordered. Boltzmann's equation, S = k ln Ω, makes this precise at the molecular level. Here k is Boltzmann's constant and Ω counts the microscopic arrangements (microstates) that produce a given macroscopic state. The more arrangements possible, the greater the disorder, and the higher the entropy. The same counting idea later reappeared in information theory, where Claude Shannon used entropy to measure uncertainty. Albert Einstein regarded classical thermodynamics, with entropy and the Second Law at its core, as the one physical theory of universal content that he was convinced would never be overthrown.
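To make the microstate counting concrete, here is a minimal Python sketch of S = k ln Ω. It assumes a toy model (hypothetical, chosen for illustration): N distinguishable particles, each sitting in either the left or the right half of a box, with the macrostate defined as "how many are on the left." Ω is then a binomial coefficient. The function names are illustrative, not from any library.

```python
import math

K_B = 1.380649e-23  # Boltzmann constant in J/K (exact value in the 2019 SI)


def microstates(n_particles: int, n_left: int) -> int:
    """Count microstates: the ways to choose which n_left of n_particles
    distinguishable particles sit in the left half of the box."""
    return math.comb(n_particles, n_left)


def boltzmann_entropy(omega: int) -> float:
    """Return S = k ln(omega) in J/K for a macrostate with omega microstates."""
    return K_B * math.log(omega)


N = 100  # illustrative particle count, far smaller than any real gas
for n_left in (0, 25, 50):
    omega = microstates(N, n_left)
    s = boltzmann_entropy(omega)
    print(f"{n_left:3d} of {N} on the left: omega = {omega:.3e}, S = {s:.3e} J/K")
```

The all-on-one-side macrostate has exactly one microstate and zero entropy, while the even split has by far the most microstates and the highest entropy, which is the counting behind a gas spreading out to fill its container.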

Entropy is not confined to physics and thermodynamics alone—it has found far-ranging applications across disciplines including chemistry, biology, cosmology, economics, and information theory and telecommunication.

What the Second Law Says About Entropy's Direction

Building on what entropy is, the Second Law of Thermodynamics tells you which direction natural processes actually run. It's a one-way rule: every spontaneous process increases total entropy, never the reverse. You'll never see a broken cup reassemble itself or heat flow from cold to hot on its own. For everyday objects made of enormous numbers of molecules, these events aren't just unlikely; they are so improbable that the Second Law rules them out.

Natural processes are irreversible: without doing work on a system from outside, you can't undo what has happened, and even then the undoing raises entropy somewhere else. Every real process generates entropy, and that's what links thermodynamics to time's arrow. The universe constantly moves toward greater disorder, and less energy becomes available for useful work as it does. That direction isn't a coincidence; it's physics enforcing a fundamental rule on everything around you. For heat transferred reversibly at a constant temperature, the change in entropy is ΔS = Q/T, where Q is the heat transferred and T is the absolute temperature in kelvins.
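As a worked example of ΔS = Q/T, here is a small Python sketch for melting ice, a case where heat really does enter at a fixed temperature. It assumes 1 kg of ice melting at 0 °C and an approximate latent heat of fusion of 334 kJ/kg; the function name is illustrative.

```python
LATENT_HEAT_FUSION = 334_000.0  # J/kg, approximate latent heat of fusion of water ice
T_MELT = 273.15                 # K, melting point of ice at 1 atm


def entropy_change(q_joules: float, t_kelvin: float) -> float:
    """Return dS = Q/T in J/K. Valid only for heat transferred reversibly
    at a fixed absolute temperature, as during a phase change."""
    if t_kelvin <= 0:
        raise ValueError("absolute temperature must be positive")
    return q_joules / t_kelvin


mass_kg = 1.0
q = mass_kg * LATENT_HEAT_FUSION  # heat absorbed while melting
print(f"Q = {q:.0f} J, dS = {entropy_change(q, T_MELT):.1f} J/K")
```

Melting 1 kg of ice raises its entropy by roughly 1.2 kJ/K. Note that T must be in kelvins: dividing by 0 (the Celsius value) would be meaningless, which is why the sketch rejects non-positive temperatures.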

The Second Law wasn't derived from theory first — it was an empirical finding that scientists observed in nature before the underlying mathematics and statistical mechanics were developed to explain it.

Why Heat and Temperature Trace Back to Entropy

Temperature controls how quickly entropy rises as you heat a substance. At constant pressure, that rate is set by the heat capacity at constant pressure, Cp: dS = (Cp/T) dT.

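Integrate that over a temperature range and you get the total entropy gained: ΔS = ∫(Cp/T) dT, taken from T1 to T2, which reduces to ΔS = Cp ln(T2/T1) when Cp is roughly constant. Since heat capacity and absolute temperature are both positive, entropy always climbs with rising temperature.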
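A minimal Python sketch of that integral follows. It assumes 1 kg of liquid water heated from 20 °C to 80 °C with a constant Cp of about 4184 J/K (an approximation; real heat capacities drift slightly with temperature). It compares the closed form Cp ln(T2/T1) with a plain midpoint-rule integration of Cp/T; both function names are illustrative.

```python
import math


def delta_s_constant_cp(cp: float, t1: float, t2: float) -> float:
    """Closed form of the integral of (Cp/T) dT when Cp does not vary with T."""
    return cp * math.log(t2 / t1)


def delta_s_numeric(cp_of_t, t1: float, t2: float, steps: int = 10_000) -> float:
    """Midpoint-rule estimate of the integral of (Cp(T)/T) dT from t1 to t2."""
    h = (t2 - t1) / steps
    total = 0.0
    for i in range(steps):
        t_mid = t1 + (i + 0.5) * h
        total += cp_of_t(t_mid) / t_mid * h  # each slice: (Cp/T) dT
    return total


CP_WATER = 4184.0  # J/K for 1 kg of liquid water, treated as constant (assumption)
t1, t2 = 293.15, 353.15  # 20 °C and 80 °C in kelvins

exact = delta_s_constant_cp(CP_WATER, t1, t2)
approx = delta_s_numeric(lambda t: CP_WATER, t1, t2)
print(f"closed form: {exact:.2f} J/K, numerical: {approx:.2f} J/K")
```

Because every slice Cp/T · dT is positive when heating, the sum can only grow. Passing a temperature-dependent function as `cp_of_t` would handle materials whose heat capacity varies, where the closed form no longer applies.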

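Heat and temperature aren't separate from entropy: heat flow is what changes a system's entropy, and temperature sets how much each joule of heat changes it. The third law of thermodynamics, which assigns zero entropy to a perfect crystal at absolute zero, places entropy on an absolute scale and gives these measurements a definitive reference point.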

Why Entropy Is the Reason Time Has a Direction

When you drop cream into coffee, it swirls and disperses — it never spontaneously reassembles. That irreversibility defines the thermodynamic time arrow: time flows from low-entropy past toward high-entropy future.

The cosmic entropy puzzle asks why the universe started ordered enough to allow this progression. The Big Bang provided that low-entropy origin, anchoring all subsequent disorder.

Three key ideas explain entropy's role in time's direction:

- Irreversibility — scrambled eggs, expanding gas, and aging never reverse naturally

- Microstates — far more arrangements produce disorder than order

- Low-entropy origin — the Big Bang's initial order enables the ongoing entropy increase

Without that early ordered state, you'd have no past, no future, and no arrow pointing between them. Some researchers have proposed that gravity itself drives the growth of order, producing a structured universe in which two opposing arrows of time point away from a single moment. Einstein himself was struck by how thermodynamics' simplicity and breadth gave it universal principles that apply at every scale of physical reality, from the smallest molecules to the cosmos itself.

Everyday Examples of Entropy You've Already Seen

Entropy isn't just an abstract physics concept; it's already playing out in your daily life. Every time your room goes from tidy to chaotic, you're watching a handy everyday analogy for increasing entropy: there are far more messy arrangements than tidy ones. Restoring order demands real effort and energy.

Melting ice, cooling coffee, and spreading perfume all follow the same pattern — systems naturally shift toward disordered states without any push. Break an egg, and you can't undo it. Perfume molecules disperse through a room and won't spontaneously recollect. Heat from your coffee spreads into cooler air until temperatures equalize.

These disordered systems aren't malfunctioning; they're behaving exactly as thermodynamics predicts. The universe consistently favors disorder over order, and your everyday surroundings are constant, visible proof of that tendency. This is also why perpetual motion machines are impossible: a machine that produces energy from nothing violates the First Law, and one that turns heat entirely into work, with no waste heat, violates the Second Law.
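The Second Law's limit on turning heat into work can be checked numerically. This Python sketch assumes an idealized engine that takes heat Q_hot from a reservoir at T_hot, does work W, and dumps the remainder into a reservoir at T_cold. The reservoirs' total entropy change is -Q_hot/T_hot + (Q_hot - W)/T_cold, and requiring it to be non-negative caps the efficiency at 1 - T_cold/T_hot (the Carnot limit). The temperatures and function names are illustrative.

```python
def total_entropy_change(q_hot: float, work: float, t_hot: float, t_cold: float) -> float:
    """Entropy change of both reservoirs (J/K) for one engine cycle.

    The engine returns to its starting state each cycle, so its own entropy
    change is zero and only the reservoirs contribute.
    """
    q_cold = q_hot - work  # energy conservation: the rest is waste heat
    return -q_hot / t_hot + q_cold / t_cold


def carnot_limit(t_hot: float, t_cold: float) -> float:
    """Largest fraction of q_hot convertible to work without total dS < 0."""
    return 1.0 - t_cold / t_hot


T_HOT, T_COLD, Q_HOT = 600.0, 300.0, 1000.0  # kelvins and joules, illustrative
max_work = carnot_limit(T_HOT, T_COLD) * Q_HOT
for work in (0.0, max_work, Q_HOT):  # no work, Carnot limit, "perfect" engine
    ds = total_entropy_change(Q_HOT, work, T_HOT, T_COLD)
    print(f"W = {work:6.1f} J -> total dS = {ds:+.3f} J/K")
```

At the Carnot limit the total entropy change is exactly zero; the "perfect" engine with W = Q_hot would make it negative, which is precisely what the Second Law forbids.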

Rudolf Clausius recognized the core of this tendency when he observed that heat flows naturally from hot bodies to cold ones and never the reverse on its own, a foundational insight that shaped our understanding of entropy.

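Can Entropy Ever Decrease?

Locally, yes. A system's entropy can fall as long as its surroundings gain at least as much, so the total never drops. Three common cases: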

- Ice formation: below freezing, water molecules lock into an ordered solid, reducing their molecular freedom, while the latent heat they release raises the entropy of the surroundings by more.

- Heat transfer: a hot object that loses heat undergoes an entropy drop, which is offset by the cold object's larger gain.

- Emergent entropy reduction: complex structures such as living organisms maintain internal order by exporting entropy to their surroundings.
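The heat-transfer case can be worked through in a few lines of Python. This sketch assumes two bodies large enough that their temperatures (350 K and 300 K, chosen for illustration) stay fixed while 100 J passes between them; the function name is hypothetical.

```python
def heat_transfer_entropy(q: float, t_hot: float, t_cold: float) -> tuple[float, float, float]:
    """Entropy changes (J/K) when heat q flows from a body at t_hot to one at t_cold.

    Assumes both bodies are large enough that their temperatures stay fixed.
    Returns (dS_hot, dS_cold, dS_total).
    """
    ds_hot = -q / t_hot   # the hot body loses entropy...
    ds_cold = q / t_cold  # ...and the cold body gains more, since t_cold < t_hot
    return ds_hot, ds_cold, ds_hot + ds_cold


for label, q in (("hot -> cold", 100.0), ("cold -> hot", -100.0)):
    ds_hot, ds_cold, ds_total = heat_transfer_entropy(q, 350.0, 300.0)
    print(f"{label}: hot {ds_hot:+.4f}, cold {ds_cold:+.4f}, total {ds_total:+.4f} J/K")
```

The natural direction gives a positive total; running the same heat backward (a negative q) gives a negative total, which is why heat does not flow from cold to hot on its own.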

The statistical perspective reinforces this: small, brief decreases are reasonably likely in tiny systems, while large decreases in macroscopic systems are practically impossible. The total entropy of the universe still rises, even when local pockets temporarily decrease. Evolution by natural selection works through small molecular changes accumulated over generations, and the local entropy decreases it produces are entirely consistent with the Second Law because the surroundings absorb the cost. For a closed system, the only way to lower its entropy is to transfer heat to the surrounding environment, because irreversible processes inside the system always generate additional entropy rather than remove it.
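The "small decreases probable, large ones nearly impossible" argument can be illustrated with a toy model. This Python sketch assumes N ideal, independent particles, each equally likely to be in the left or right half of a box, and computes the probability of a fluctuation toward a lower-entropy, lopsided state. It is an illustration of the statistical argument, not a calculation for any real gas; the function name is hypothetical.

```python
import math


def prob_at_least_left(n: int, k_min: int) -> float:
    """Probability that at least k_min of n independent particles are in the left
    half of a box, when each particle is equally likely to be on either side."""
    favourable = sum(math.comb(n, k) for k in range(k_min, n + 1))
    return favourable / 2**n  # exact integer counts, one final division


for n in (10, 100, 1000):
    p60 = prob_at_least_left(n, 6 * n // 10)  # at least 60% on the left
    p_all = prob_at_least_left(n, n)          # every particle on the left
    print(f"N = {n:4d}: P(>= 60% left) = {p60:.2e}, P(all left) = {p_all:.2e}")
```

With 10 particles a 60/40 imbalance happens more than a third of the time; with 1000 it is already a one-in-billions event, and a real gas contains around 10^23 molecules.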

How Living Things Beat Entropy Without Violating Physics

Living things seem to defy entropy at first glance, but they're actually exploiting a pivotal loophole: the second law only demands that total entropy increases, not that every local pocket must. Your cells continuously import high-energy molecules, use them to drive protein folding, compartmentalization, and ATP-powered reactions, then export disorder outward through waste heat dissipation.

Cell membranes maintain electrical, chemical, and pressure gradients through active pumping, which lets cells keep their interiors ordered while entropy is carried away. Compartmentalization alone reduces cellular entropy by measurable amounts, as seen in yeast and algae. Self-reproduction reinforces this further, selecting organisms whose genome arrangements best let them sustain this negative entropy.

You're not watching physics get broken; you're watching it get strategically redirected, keeping internal systems ordered while the surrounding environment absorbs the thermodynamic cost. One proposed mechanism for this spatially differential entropy reduction is a Turing instability, with cAMP and ATP acting as morphogens that coordinate biochemical self-organization across space and time. Over evolutionary time, gene duplication followed by divergence extends this ordered state further, introducing entirely new biological functions and expanding the range of material and energy sources organisms can use.

How Entropy Connects Black Holes, Heat Death, and the Universe's End

Black holes sit at the intersection of three of physics' most profound ideas: entropy, information, and the fate of the universe. Through Hawking radiation, black holes slowly evaporate, pushing the universe's entropy toward its maximum. The black hole information paradox, quantum gravity, and the holographic principle all shape how physicists understand this process.
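To see just how slowly black holes evaporate, here is a Python sketch using two standard textbook formulas for a non-rotating black hole: the Hawking temperature T = ħc³/(8πGMk_B) and the commonly quoted idealized lifetime t = 5120πG²M³/(ħc⁴). The lifetime formula is an order-of-magnitude estimate that ignores accretion and the details of which particles are emitted; the solar-mass value and function names are illustrative.

```python
import math

G = 6.67430e-11            # gravitational constant, m^3 kg^-1 s^-2
C = 299_792_458.0          # speed of light, m/s
HBAR = 1.054571817e-34     # reduced Planck constant, J s
K_B = 1.380649e-23         # Boltzmann constant, J/K
M_SUN = 1.989e30           # solar mass, kg (approximate)
YEAR = 365.25 * 24 * 3600  # seconds in a Julian year


def hawking_temperature(mass_kg: float) -> float:
    """Hawking temperature T = hbar c^3 / (8 pi G M k_B) in kelvins."""
    return HBAR * C**3 / (8 * math.pi * G * mass_kg * K_B)


def evaporation_time_years(mass_kg: float) -> float:
    """Idealized lifetime t = 5120 pi G^2 M^3 / (hbar c^4), converted to years."""
    seconds = 5120 * math.pi * G**2 * mass_kg**3 / (HBAR * C**4)
    return seconds / YEAR


print(f"T_H = {hawking_temperature(M_SUN):.2e} K")
print(f"lifetime = {evaporation_time_years(M_SUN):.1e} years")
```

A solar-mass black hole comes out at well under a millionth of a kelvin, with a lifetime of order 10^67 years. Heavier black holes are colder and live longer, since the temperature falls as 1/M and the lifetime grows as M³.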

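A black hole's entropy scales with the area of its event horizon, not its volume. This supports the holographic principle, the idea that the information a region can hold is bounded by the area of its boundary, and suggests that such information limits may shape how the cosmos evolves.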

Hawking radiation carries entropy into the surroundings, more than compensating for the drop in the black hole's own entropy as it loses mass, and so drives the universe toward thermodynamic equilibrium.

In this picture, heat death arrives once even the black holes have evaporated, leaving energy spread evenly in an equilibrium state. The Generalized Second Law adds black hole entropy to the entropy of ordinary matter and radiation and states that this total never decreases throughout the process.

You're witnessing the Second Law operating at its grandest scale, irreversibly steering everything toward the universe's final, uniform end. In 1974, Stephen Hawking showed that black holes radiate, confirming Jacob Bekenstein's proposal that black hole entropy is proportional to the area of the event horizon and fixing the constant of proportionality at one-quarter: measured in Planck units, the entropy is one-quarter of the horizon area.
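The one-quarter rule can be turned into numbers. This Python sketch assumes a non-rotating (Schwarzschild) black hole, with horizon radius r_s = 2GM/c², and evaluates the Bekenstein-Hawking entropy S = k_B c³A/(4Għ), which is one-quarter of the horizon area A divided by the Planck area Għ/c³. The solar-mass value and function names are illustrative.

```python
import math

G = 6.67430e-11          # gravitational constant, m^3 kg^-1 s^-2
C = 299_792_458.0        # speed of light, m/s
HBAR = 1.054571817e-34   # reduced Planck constant, J s
K_B = 1.380649e-23       # Boltzmann constant, J/K
M_SUN = 1.989e30         # solar mass, kg (approximate)


def horizon_area(mass_kg: float) -> float:
    """Event-horizon area A = 4 pi r_s^2 of a non-rotating black hole,
    with Schwarzschild radius r_s = 2 G M / c^2."""
    r_s = 2 * G * mass_kg / C**2
    return 4 * math.pi * r_s**2


def bekenstein_hawking_entropy(mass_kg: float) -> float:
    """S = k_B * A / (4 * Planck area) in J/K, with Planck area = G hbar / c^3."""
    planck_area = G * HBAR / C**3
    return K_B * horizon_area(mass_kg) / (4 * planck_area)


s_sun = bekenstein_hawking_entropy(M_SUN)
print(f"S = {s_sun:.2e} J/K, S/k_B = {s_sun / K_B:.2e}")
print(f"doubling the mass multiplies S by {bekenstein_hawking_entropy(2 * M_SUN) / s_sun:.3f}")
```

A single solar-mass black hole carries around 10^77 in units of Boltzmann's constant. Doubling the mass quadruples the entropy, because the horizon radius doubles and area grows with the radius squared; that is the "area, not volume" scaling in action.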