John Von Neumann and Computer Architecture

John von Neumann earned his doctorate at 22 and went on to reshape computing. You can trace nearly every modern computer back to the stored-program concept he helped define, which lets instructions and data share the same memory. He also contributed to the Manhattan Project, laid early groundwork for neural networks, and helped establish computing as a formal science. There's far more to his story than most people realize.

Key Takeaways

- Von Neumann earned his doctorate in mathematics, with a thesis on set theory, at age 22, an early sign of the mathematical ability behind his computing innovations.

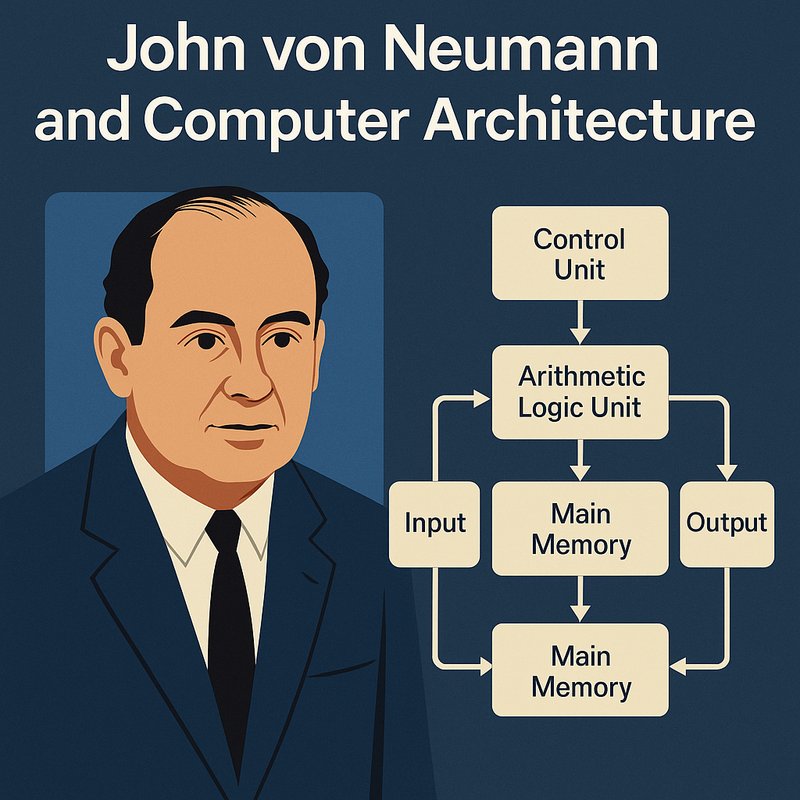

- The Von Neumann architecture organizes computers around five core elements: a Control Unit, ALU, main memory, registers, and input/output devices.

- His stored-program concept eliminated physical rewiring by allowing instructions and data to share the same memory, enabling modern software development.

- Von Neumann's Manhattan Project work revealed the need for advanced computational tools, directly accelerating the development of electronic stored-program computing.

- Before Von Neumann, computing lacked scientific identity; he grounded the field in mathematical logic and formal proof systems.

The Mathematician Who Rewired How Computers Think

John von Neumann wasn't just a mathematician. He was the architect of how modern computers think, and his work helped turn computing from a mechanical process into something truly programmable. You can trace nearly every modern computer back to the architecture he described in 1945, which stored both data and instructions in the same memory. That single insight turned a program into something you could load, reuse, and replace without touching the hardware.

As computing's early visionary, he didn't stop at architecture. He co-developed the Monte Carlo method with Stanislaw Ulam, modeled atomic bomb behavior through simulations, and laid groundwork for neural networks and artificial intelligence. His work connected hardware, software, and mathematics under one shared framework. Without his contributions, the computers you use daily wouldn't exist in their current form, and neither would modern programming. His minimax theorem in game theory provided a mathematical framework for decision making under uncertainty, influencing fields far beyond computing.

Born in 1903 in Budapest, Hungary, Von Neumann showed extraordinary intellectual promise from a young age, earning his doctorate in mathematics in 1926, at twenty-two, with a thesis on the axiomatization of set theory. His early mastery of rigorous proof techniques and analytic approaches gave him the mathematical foundation that would later revolutionize computer science and numerous other disciplines.

The Von Neumann Stored-Program Concept That Changed Computing

Before the stored-program idea took hold, machines like ENIAC and Colossus were programmed with switches and plug cables, so changing what they did meant physically rewiring them. The stored-program concept eliminated that limitation by placing instructions and data in the same memory, so a program became something you load rather than something you wire.

Von Neumann's 1945 EDVAC report described this architecture in plain terms, outlining how a machine could load new instructions without hardware changes. That meant one computer could handle payroll, then ballistics calculations, simply by swapping programs. It also enabled self-modifying code, compilers, and operating systems—tools that treat instructions as editable data.
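To make the idea of swapping programs concrete, here is a minimal sketch of a toy stored-program machine. It is not EDVAC's instruction set: the opcodes (`LOAD`, `ADD`, `STORE`, `HALT`), the single accumulator, and the three sample programs are all hypothetical, chosen only to show that one machine can do different jobs when its memory contents change, and that a program can overwrite its own instructions.

```python
# Toy stored-program machine: instructions and data share one memory list.
# The opcodes (LOAD, ADD, STORE, HALT) are hypothetical, chosen for illustration.

def run(memory):
    """Execute the program in `memory` from address 0 and return the memory."""
    acc = 0   # accumulator
    pc = 0    # program counter
    while True:
        op, arg = memory[pc]   # an instruction is just another memory cell
        pc += 1
        if op == "LOAD":
            acc = memory[arg]
        elif op == "ADD":
            acc = acc + memory[arg]
        elif op == "STORE":
            memory[arg] = acc
        elif op == "HALT":
            return memory
        else:
            raise ValueError(f"unknown opcode: {op!r}")


# Program A: add the values at addresses 4 and 5, store the sum at address 6.
add_two = [("LOAD", 4), ("ADD", 5), ("STORE", 6), ("HALT", 0), 2, 3, 0]

# Program B: same machine, different memory contents, different job.
double = [("LOAD", 4), ("ADD", 4), ("STORE", 5), ("HALT", 0), 21, 0]

# Program C: copies the instruction held at address 5 over the HALT at
# address 2, so the program rewrites itself before continuing.
patching = [("LOAD", 5), ("STORE", 2), ("HALT", 0), ("STORE", 7),
            ("HALT", 0), ("LOAD", 6), 7, 0]

print(run(add_two)[6])    # 5
print(run(double)[5])     # 42
print(run(patching)[7])   # 7
```

Program C only reaches its second `STORE` because the first two instructions replaced the `HALT` at address 2. That is the sense in which a stored-program machine treats instructions as editable data.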

Despite limitations such as the risk of a program accidentally overwriting its own instructions, the design became foundational. The Manchester Baby ran the first stored program in June 1948, proving the concept worked in practice. Alan Turing's 1936 paper on the universal computing machine laid theoretical groundwork that preceded and informed stored-program design. Von Neumann himself was not the sole inventor but an advisor and communicator whose clear, organized descriptions helped spread the concept across the broader computing field.

How Does Von Neumann Architecture Actually Work?

At its core, Von Neumann architecture organizes a computer around five elements: a Control Unit, an Arithmetic Logic Unit (ALU), main memory, registers, and input/output devices. These components communicate through a shared bus system carrying data, addresses, and control signals.

Here's how it works: the Program Counter holds the address of the next instruction, the Control Unit fetches that instruction into the Instruction Register and decodes it, and the ALU carries out any arithmetic or logic it calls for. This fetch-decode-execute cycle repeats continuously, with the Program Counter updated after each fetch. The Memory Data Register temporarily holds whatever is being read from or written to main memory along the way.
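The cycle described above can be sketched as a small simulator. This is an illustrative model, not a real instruction set: the `CPU` class, the numeric encoding (opcode times 100 plus operand), and the Memory Address Register (`mar`) are assumptions added for the example, while the Program Counter, Instruction Register, and Memory Data Register mirror the registers named in the text.

```python
from dataclasses import dataclass


@dataclass
class CPU:
    memory: list
    pc: int = 0        # Program Counter: address of the next instruction
    ir: int = 0        # Instruction Register: instruction being executed
    mar: int = 0       # Memory Address Register (assumed helper register)
    mdr: int = 0       # Memory Data Register: word in transit to/from memory
    acc: int = 0       # accumulator used by the ALU
    halted: bool = False

    def read(self, address):
        self.mar = address
        self.mdr = self.memory[self.mar]
        return self.mdr

    def write(self, address, value):
        self.mar = address
        self.mdr = value
        self.memory[self.mar] = self.mdr

    def step(self):
        # Fetch: copy the instruction at PC into IR, then advance PC.
        self.ir = self.read(self.pc)
        self.pc += 1
        # Decode: hypothetical encoding, opcode * 100 + operand address.
        opcode, operand = divmod(self.ir, 100)
        # Execute.
        if opcode == 0:      # HALT
            self.halted = True
        elif opcode == 1:    # LOAD
            self.acc = self.read(operand)
        elif opcode == 2:    # ADD
            self.acc += self.read(operand)
        elif opcode == 3:    # STORE
            self.write(operand, self.acc)
        else:
            raise ValueError(f"unknown opcode: {opcode}")

    def run(self):
        while not self.halted:
            self.step()


# Addresses 0-3 hold instructions, addresses 4-6 hold data.
cpu = CPU(memory=[104, 205, 306, 0, 40, 2, 0])
cpu.run()
print(cpu.memory[6])  # 42
```

Every instruction fetch and every data access goes through the same `read` and `write` methods, which is the shared pathway the architecture is known for.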

However, because instructions and data share the same memory and pathways, the processor cannot fetch an instruction and access data at the same moment. This constraint is known as the von Neumann bottleneck, and it limits how much work the machine can do in parallel. Modern computers soften it with split instruction and data caches, but the foundational architecture remains largely unchanged from its 1945 origins. The Harvard architecture avoids the issue by using separate buses for instructions and data, so both can be fetched simultaneously.
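A rough way to see the difference is to count bus cycles under a deliberately simple timing model. The model is an assumption, not a description of any real processor: each instruction needs one fetch plus some number of data accesses, each bus moves one word per cycle, and with separate buses the fetch overlaps fully with the data access. The function name `cycles` and the sample program are hypothetical.

```python
# Toy timing model (an assumption, not a measurement): every instruction needs
# one fetch plus zero or more data accesses, and a bus moves one word per cycle.

def cycles(program, shared_bus):
    """Count bus cycles for a list of (name, data_accesses) instructions."""
    total = 0
    for _name, data_accesses in program:
        if shared_bus:
            # One shared bus: the fetch and the data accesses take turns.
            total += 1 + data_accesses
        else:
            # Separate instruction and data buses: the two overlap.
            total += max(1, data_accesses)
    return total


program = [("LOAD", 1), ("ADD", 1), ("STORE", 1), ("JUMP", 0)]

print(cycles(program, shared_bus=True))    # 7
print(cycles(program, shared_bus=False))   # 4
```

Real hardware adds caches, pipelines, and wider buses, so the gap is rarely this clean, but the sketch shows where the extra cycles on a shared bus come from.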

How the Manhattan Project Pushed Von Neumann to Invent Modern Computing

When J. Robert Oppenheimer invited Von Neumann to join the Manhattan Project in late 1943, he unknowingly set computing history in motion. Von Neumann tackled the explosive physics behind Fat Man's plutonium implosion design, where implosion symmetry considerations demanded extraordinary precision.

Reactor-produced plutonium could not be used in a simpler gun-type design, so the core had to be compressed by a nearly uniform inward force. Von Neumann helped develop James Tuck's explosive lens concept to focus shock waves symmetrically onto the core.

Solving these problems exposed a critical gap — hand calculations simply couldn't handle complex hydrodynamic simulations fast enough. The need for advanced computational tools became undeniable, pushing Von Neumann toward specialized IBM mechanical tabulators.

That experience revealed computers as powerful engines for applied mathematics, not just calculators. Los Alamos ultimately accelerated his post-war pursuit of electronic stored-program computing, reshaping the technological world you live in today. He also insisted that the design of the IAS computer be published openly, which let other institutions build their own machines from it. Long before his wartime work, Von Neumann had already established himself as a prodigy, becoming the youngest Privatdozent at Friedrich-Wilhelms-Universität Berlin in 1928.

What Von Neumann Actually Built With ENIAC and EDVAC

Los Alamos gave Von Neumann more than a nuclear weapons problem — it gave him a reason to chase computing power. He arrived at the Moore School in September 1944, after ENIAC's design was already finished. His biggest contribution wasn't building it — it was recognizing that ENIAC's parallel processing could tackle Manhattan Project calculations nobody else could handle.

When he joined Mauchly and Eckert's team by November 1944, the focus shifted to EDVAC. You'd notice the key difference immediately: EDVAC's serial calculation replaced ENIAC's parallel approach, and stored programs eliminated the exhausting process of re-inputting instructions. EDVAC also used two identical arithmetic units to cross-check results. The conceptual framework was solid by 1946, though the machine itself wasn't completed until 1952. His analysis of EDVAC's logical design became a 101-page report recommending a central control unit, processor, and random-access memory.

Von Neumann reportedly wrote the First Draft report entirely by hand on a train commuting to Los Alamos, with Goldstine later arranging for it to be typed and distributed. Copies were circulated on June 25, 1945, and the report's influence spread quickly. Maurice Wilkes of Cambridge University credited it as the direct reason he traveled to the United States to learn more.

Why Does the Von Neumann Architecture Bottleneck Still Matter Today?

Why does a design from the 1940s still throttle the world's most advanced AI systems? The answer lies in processing speed versus data transfer — CPUs have grown exponentially faster, but the single shared bus connecting memory to processors hasn't kept pace. Every generation widens that gap.

For AI, the consequences are severe. By some estimates, data transfers dominate the energy budget of AI workloads, accounting for roughly 90% of total energy use. Repeatedly moving billions of model weights between DRAM and GPUs costs far more than the actual computations.
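A back-of-envelope calculation shows how a ratio like this can arise. The two energy constants below are hypothetical placeholders, not measured values, and the result depends entirely on them. The function `transfer_energy_fraction` and the workload (one matrix-vector product with 2-byte weights, each read once from memory) are assumptions made for the sketch.

```python
# All energy figures below are hypothetical placeholders, not measured values.
ENERGY_PER_BYTE_MOVED_PJ = 10.0   # assumed cost to move one byte from DRAM
ENERGY_PER_FLOP_PJ = 1.0          # assumed cost of one arithmetic operation


def transfer_energy_fraction(n, bytes_per_weight=2):
    """Share of energy spent moving weights for one n-by-n matrix-vector product."""
    weights = n * n
    bytes_moved = weights * bytes_per_weight   # each weight is read once
    flops = 2 * weights                        # one multiply and one add per weight
    transfer = bytes_moved * ENERGY_PER_BYTE_MOVED_PJ
    compute = flops * ENERGY_PER_FLOP_PJ
    return transfer / (transfer + compute)


print(round(transfer_energy_fraction(4096), 3))  # 0.909
```

Under these assumed costs the share is the same for any `n`, because both the bytes moved and the arithmetic grow with the number of weights. The fraction only falls if each weight is reused for more arithmetic once it has been fetched, or if it never has to move at all.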

Training large language models can take months and consume as much electricity as many households use in a year, in large part because of this inefficiency.

Caches and parallel architectures soften the blow, but they don't eliminate it. The bottleneck persists because the fundamental architecture remains unchanged — and modern AI makes you feel every bit of it. Emerging solutions like analog in-memory computing store model weights directly within memory devices, eliminating the need to shuttle data to a separate processor entirely.

The industry has responded by turning to hardware alternatives, with FPGAs playing a critical role in evolving future computing platforms, already powering Microsoft's Bing search engine and driving Azure cloud computing services.

Von Neumann's Overlooked Role in AI and Neural Networks

Most people credit Von Neumann solely for the stored-program architecture, but his fingerprints are all over the foundational concepts driving modern AI. His cellular automata influence stretched far beyond grid-based simulations, directly shaping how researchers approached machine cognition.

Consider three overlooked contributions:

- Self-replicating machines: His universal constructor theory inspired evolutionary algorithms and artificial life research.

- Neural network foundations: His 1945 EDVAC report borrowed the artificial-neuron notation of McCulloch and Pitts to describe logic elements, linking computer design to models of biological processing.

- Learning machines: His stored-program design let programs modify their own instructions, an early route to adaptive behavior that predates modern machine learning by decades.

You're fundamentally looking at someone who envisioned AI's core mechanics before the field existed. His work on self-replicating machines alone reshaped how scientists model reproduction, evolution, and intelligent adaptation computationally. Von Neumann is also regarded as a founding father of artificial life, having bridged the gap between biological systems and computational theory in ways that continue to influence research today. Beyond computing, Von Neumann made significant contributions across disciplines, including substantial work in set theory, game theory, and functional analysis.

How Did Von Neumann Turn Computing Into a Real Science?

Before Von Neumann, computing was a collection of engineering tricks without a unifying scientific identity. He changed that by grounding the field in mathematical logic and formal proof systems, publishing a landmark 1927 paper on mathematical consistency that reshaped how theorists thought about computation's limits.

He didn't just theorize—he applied logical structures to hardware design, clarifying how formal languages could describe program behavior accurately. That precision gave computing a common language and shared building blocks.

You can trace modern computing's scientific credibility directly to his insistence on precision and rigor. Turing's theoretical results and von Neumann's practical approach worked as complementary forces, together establishing the mathematical and architectural foundations that defined computing as a legitimate science.

Von Neumann also pioneered automata theory and cellular automata, extending computing's scientific reach beyond hardware into the study of self-replicating systems and the theoretical behavior of machines.
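For a feel of how a cellular automaton can copy a pattern, here is a minimal sketch. It is not von Neumann's own self-replicating automaton, which used 29 cell states and a far more elaborate rule set. It uses a much simpler two-state parity rule on the four-cell "von Neumann neighborhood" (up, down, left, right), a rule usually associated with Edward Fredkin. The grid size, the seed pattern, and the helper names `step`, `shift`, and `show` are assumptions made for the example.

```python
# Parity rule on the von Neumann neighborhood: a cell's next state is the XOR
# of its four orthogonal neighbors. The grid wraps around at the edges.

def step(grid):
    n = len(grid)
    return [
        [
            grid[(r - 1) % n][c] ^ grid[(r + 1) % n][c]
            ^ grid[r][(c - 1) % n] ^ grid[r][(c + 1) % n]
            for c in range(n)
        ]
        for r in range(n)
    ]


def shift(grid, dr, dc):
    """Return a copy of the grid moved by dr rows and dc columns (wrapping)."""
    n = len(grid)
    return [[grid[(r - dr) % n][(c - dc) % n] for c in range(n)] for r in range(n)]


def show(grid):
    print("\n".join("".join("#" if cell else "." for cell in row) for row in grid))


N = 16
seed = [[0] * N for _ in range(N)]
for r, c in [(8, 8), (8, 9), (9, 8)]:   # a small L-shaped pattern
    seed[r][c] = 1

grid = seed
for _ in range(4):
    grid = step(grid)

show(grid)
print(sum(map(sum, grid)))  # 12 live cells: four copies of the 3-cell seed
```

After four steps the original pattern is gone and four copies of it sit four cells away in each direction. The rule is linear, so this happens for any seed after a power-of-two number of steps. It is replication by arithmetic rather than by construction, which is what separates it from von Neumann's universal constructor.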

The Von Neumann Architecture Myths That Still Confuse Engineers

Few myths in computing history have proven as stubborn as those surrounding Von Neumann architecture, and they're still tripping up engineers today. The confusion over who authored the EDVAC report started it all: the draft Herman Goldstine circulated carried only von Neumann's name, leaving the contributions of Eckert, Mauchly, and the rest of the Moore School team uncredited. That single decision shaped decades of misattribution.

Meanwhile, hardware evolution towards von Neumann style happened gradually, not through one genius stroke.

Here's what you need to unlearn:

- Von Neumann didn't solely invent stored-program computing—John Mauchly publicly disputed that claim.

- Harvard architecture wasn't superior technology unfairly buried—Aiken's Mark I used paper tape, hardly cutting-edge.

- Security vulnerabilities aren't uniquely von Neumann's fault—unified memory won because it scaled.

Recognizing these myths sharpens your understanding of how computing architecture actually developed. The same pattern of misattribution extends beyond hardware—recent scholarship reveals von Neumann's views on quantum measurement were misrepresented in subsequent literature, with researchers demonstrating he never actually proposed that consciousness causes quantum collapse. Beyond technical misattributions, the broader culture of mythologizing his abilities has led to uncritical acceptance of legends about von Neumann memorizing entire books verbatim, claims that remain difficult to verify and easy to exaggerate.