Uber and the Routing Engine (Michelangelo)

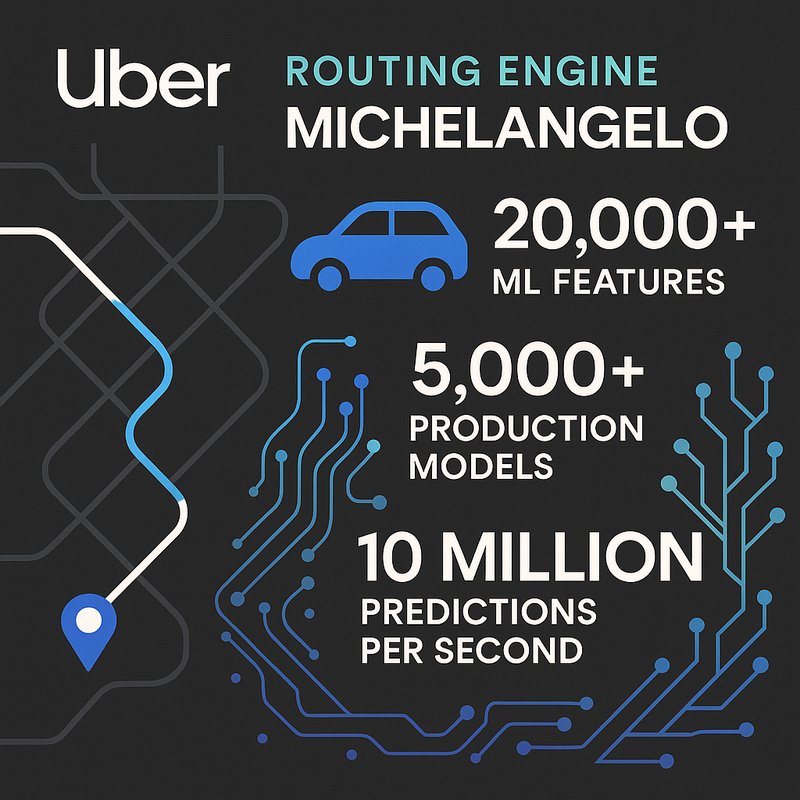

Every time you request an Uber ride, Michelangelo, Uber's internal machine learning platform, is quietly serving the predictions behind your estimated arrival time. It manages over 20,000 ready-to-use features, keeps 5,000+ production models running, and handles more than 10 million predictions per second at peak across 400+ use cases. It is also designed to catch model failures before they reach you. Stick around, and you'll uncover just how deep this system goes.

Key Takeaways

- Michelangelo is Uber's centralized ML platform managing the full lifecycle of models, powering critical services like Rides ETA and Eats ETD.

- The platform manages 5,000+ production models and handles 400+ use cases without operational chaos.

- Michelangelo's Feature Store (Palette) maintains 20,000+ ready-to-use features, reducing duplication and accelerating ML deployment across teams.

- Ray-based training replaced Spark, adding fault tolerance and enabling automatic model generation per data segment.

- Michelangelo inspired Tecton, a leading commercial feature store platform, highlighting its industry-wide influence beyond Uber.

What Is Michelangelo and Why Did Uber Build It?

Uber's Michelangelo is a centralized machine learning platform that manages the entire ML lifecycle — from data preparation and model training to deployment, prediction, and monitoring. It supports traditional ML, time series forecasting, and deep learning, powering critical use cases like Rides ETA and Eats ETD.

Uber built Michelangelo to solve real operational challenges. Managing billions of data points from 15 million daily rides demanded scalable deployment and centralized governance across engineering teams. Without a unified system, ML workflows were inconsistent and difficult to scale.

Introduced in early 2016, Michelangelo standardized how developers build and deploy models across the organization. It has since evolved to support generative AI and LLMOps capabilities. Today, it handles 20,000 monthly training jobs, keeps 5,000+ models serving in production, and delivers 10 million real-time predictions per second at peak.

Before Michelangelo existed, Uber faced significant issues with data quality, latency, efficiency, and reliability that made scaling machine learning operations across the organization extremely difficult.

Michelangelo's Feature Store: 20,000 ML Features, Ready to Use

At the heart of Michelangelo's data infrastructure sits Palette, Uber's internal feature store built to manage and share feature pipelines across teams. It supports batch and near-real-time computation, giving you over 20,000 ready-to-use features covering cities, drivers, riders, and specialized domains like Eats and Fraud.

Palette simplifies feature engineering workflows by auto-generating pipelines and updating features daily, so you're not rebuilding what already exists. It powers hundreds of ML scenarios across Uber, supporting roughly 400 active projects and over 20,000 monthly training jobs.

Its data governance strategies rely on formal schemas, metadata consolidation, and validation to keep features reliable and shareable. Backed by Hive, Cassandra, Kafka, and Spark, Palette reduces duplication, accelerates production deployment, and democratizes ML by giving every team a strong foundation to build on.

To keep models accurate over time, Palette incorporates centralized monitoring and observability of both system and model-level metrics, enabling teams to detect feature drift and prediction drift before they impact production.

Tecton, one of the most complete feature store platforms in the current market, was founded by engineers from the Michelangelo team and carries forward the same architectural principles that made Palette so effective at scale.
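The schema-and-validation idea behind Palette's governance can be illustrated with a small sketch. This is not Palette's actual schema format or API, which the article does not describe; `FeatureSpec`, `validate_batch`, and the feature names are hypothetical, chosen only to show how a formal schema lets a batch of feature rows be checked before it is shared.

```python
from dataclasses import dataclass
from typing import Any


# Hypothetical schema entry; Palette's real schema format is not shown in this article.
@dataclass(frozen=True)
class FeatureSpec:
    name: str
    dtype: type
    max_null_rate: float  # allowed fraction of missing values in a batch


def validate_batch(rows: list[dict[str, Any]], specs: list[FeatureSpec]) -> list[str]:
    """Return human-readable violations; an empty list means the batch passed."""
    problems: list[str] = []
    for spec in specs:
        values = [row.get(spec.name) for row in rows]
        nulls = sum(v is None for v in values)
        null_rate = nulls / len(values) if values else 0.0
        if null_rate > spec.max_null_rate:
            problems.append(
                f"{spec.name}: null rate {null_rate:.2f} exceeds {spec.max_null_rate:.2f}"
            )
        bad_types = sum(v is not None and not isinstance(v, spec.dtype) for v in values)
        if bad_types:
            problems.append(f"{spec.name}: {bad_types} value(s) are not {spec.dtype.__name__}")
    return problems


# Illustrative feature names, not real Palette features.
specs = [
    FeatureSpec("trip_distance_km", float, max_null_rate=0.0),
    FeatureSpec("driver_rating", float, max_null_rate=0.5),
]
batch = [
    {"trip_distance_km": 3.2, "driver_rating": 4.9},
    {"trip_distance_km": "n/a", "driver_rating": None},
]
violations = validate_batch(batch, specs)
```

Here the string `"n/a"` is reported as a type violation, while the single missing rating stays within its allowed null rate. A production feature store would run checks like these inside the pipeline rather than on in-memory lists.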

Training and Shipping 5,000 Models Without Chaos

Running thousands of ML models in production sounds chaotic, but Michelangelo 2.0 makes it manageable by rebuilding the training stack from the ground up. It replaces Spark with Ray-based trainers, applies data partitioning strategies to auto-generate models per segment, and uses active model management to prune underperformers automatically.

Here's what keeps 5,000 production models running without chaos:

- Ray-based training replaces Spark, adding fault tolerance and GPU support

- Ray Tune handles hyperparameter search across all model types

- Data partitioning strategies automatically train global, country, and city-level models

- Active model management falls back to parent models when partitions underperform

- Neuropod engine handles low-latency deep learning serving for tier-1 projects

The result: 10 million real-time predictions per second at peak, cleanly managed.
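The partition-and-fallback behaviour listed above can be sketched in a few lines. This is a simplified illustration rather than Michelangelo's implementation: `PartitionModel`, `pick_serving_model`, the error metric, and the partition keys are all hypothetical, and the rule "serve a partition's model only if it is at least as good as its parent" is an assumption about what "underperform" means.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class PartitionModel:
    key: str               # e.g. "global", "US", "US/san_francisco"
    error: float           # validation error, lower is better (hypothetical metric)
    parent: Optional[str]  # key of the parent partition, None for the global model


def pick_serving_model(key: str, models: dict[str, PartitionModel]) -> str:
    """Walk up the hierarchy until a model is at least as good as its parent."""
    current = models[key]
    while current.parent is not None:
        parent = models[current.parent]
        if current.error <= parent.error:
            return current.key
        current = parent  # partition underperforms, so fall back one level
    return current.key


models = {
    m.key: m
    for m in [
        PartitionModel("global", error=0.20, parent=None),
        PartitionModel("US", error=0.15, parent="global"),
        PartitionModel("US/san_francisco", error=0.12, parent="US"),
        PartitionModel("US/boise", error=0.30, parent="US"),  # too little data
    ]
}

sf_model = pick_serving_model("US/san_francisco", models)
boise_model = pick_serving_model("US/boise", models)
```

In this toy hierarchy the San Francisco model serves its own traffic, while the sparse Boise partition falls back to the country-level model.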

How Michelangelo Supports Uber's DeepETA Model in Production

When a rider requests a trip, the entire ETA prediction flow runs through a tightly integrated chain: uRoute acts as the frontend for all routing lookups, pulling route lines and model features from the routing engine, then invoking DeepETA via Michelangelo's Online Prediction Service.
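A minimal sketch of that call chain follows, under stated assumptions. The function names, the `routing_eta_s` feature key, and the idea that the model returns a correction added to the routing engine's own estimate are illustrative; none of them are taken from this article or from Uber's actual interfaces.

```python
from typing import Callable

Point = tuple[float, float]
RouteFeatures = dict[str, float]


def predict_eta(
    origin: Point,
    destination: Point,
    routing_engine: Callable[[Point, Point], RouteFeatures],
    model: Callable[[RouteFeatures], float],
) -> float:
    """Frontend role: fetch route features, call the model, combine the results."""
    features = routing_engine(origin, destination)
    correction_s = model(features)  # assumed: model outputs a correction in seconds
    return max(0.0, features["routing_eta_s"] + correction_s)


# Stand-ins for the routing engine and the online prediction service.
def fake_routing_engine(origin: Point, destination: Point) -> RouteFeatures:
    return {"routing_eta_s": 600.0, "distance_m": 4200.0}


def fake_model(features: RouteFeatures) -> float:
    return 45.0  # pretend the model expects 45 extra seconds of delay


eta_s = predict_eta((37.77, -122.42), (37.79, -122.40), fake_routing_engine, fake_model)
```

Passing the routing engine and the model in as callables keeps the frontend logic testable without either real service.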

Neuropod handles low-latency serving for both TensorFlow and PyTorch models, which lets Michelangelo serve a DeepETA network of more than 100 million parameters, trained on one billion trips.

You'll also notice strong DevOps practices for production ML throughout the system: automated retraining workflows, DAG-based pipelines with checkpoint resumption, and version-controlled model configurations keep deployments consistent.
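To make "DAG-based pipelines with checkpoint resumption" concrete, here is a small sketch using Python's standard `graphlib`. It is not Michelangelo's pipeline engine; `run_pipeline`, the step names, and the in-memory `checkpoint` set are hypothetical, and a real system would persist the checkpoint to durable storage.

```python
from graphlib import TopologicalSorter
from typing import Callable


def run_pipeline(
    steps: dict[str, Callable[[], None]],
    deps: dict[str, set[str]],
    completed: set[str],
) -> list[str]:
    """Run steps in dependency order, skipping any already recorded in `completed`."""
    executed: list[str] = []
    for name in TopologicalSorter(deps).static_order():
        if name in completed:
            continue
        steps[name]()        # may raise; `completed` then acts as the checkpoint
        completed.add(name)  # record success only after the step finishes
        executed.append(name)
    return executed


attempts = {"train": 0}


def flaky_train() -> None:
    attempts["train"] += 1
    if attempts["train"] == 1:
        raise RuntimeError("simulated worker failure")


steps = {
    "prepare_data": lambda: None,
    "train": flaky_train,
    "evaluate": lambda: None,
    "deploy": lambda: None,
}
# Each step maps to the set of steps that must finish before it.
deps = {
    "prepare_data": set(),
    "train": {"prepare_data"},
    "evaluate": {"train"},
    "deploy": {"evaluate"},
}

checkpoint: set[str] = set()
try:
    run_pipeline(steps, deps, checkpoint)  # fails during "train"
except RuntimeError:
    pass
resumed = run_pipeline(steps, deps, checkpoint)  # picks up after "prepare_data"
```

The second run skips `prepare_data` because it already succeeded, which is the property that makes long training pipelines cheap to retry.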

Canvas manages the full ML lifecycle, while built-in observability tools monitor feature consistency, evaluation scores, and explainability outputs, accelerating recovery whenever pipeline failures occur in production.

How Michelangelo Serves 10 Million Predictions Per Second

Several infrastructure pieces work together to sustain that throughput:

- Triton inference server handles GPU-optimized model execution

- Kubernetes clusters provide portable, federated job management

- Elastic resource sharing dynamically allocates CPU and GPU capacity

- Cassandra delivers fast feature retrieval at scale

- Separated control and data planes prevent bottlenecks across offline and online processing

Michelangelo was originally built on open source systems such as HDFS, Spark, Samza, Cassandra, MLlib, XGBoost, and TensorFlow, with components like Ray and Triton added as the platform evolved. That foundation now supports 5,000+ production models.

Why Most ML Models Fail Quietly, and How Michelangelo Catches It

Most software fails loudly — errors surface, exceptions throw, tests catch regressions before they reach production. ML models don't work that way. They degrade silently, serving slightly worse predictions until small quality drops ripple across millions of users.

Michelangelo tackles this through layered defenses. Data quality checks start at ingestion: schema validation catches type mismatches, while distribution and null-rate checks flag shifts before they contaminate downstream features. Using identical imputation logic in training and serving eliminates hidden prediction failures caused by subtle pipeline mismatches.
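The point about identical imputation logic is easiest to see in code. The sketch below is an illustration only: `MedianImputer` and the feature names are hypothetical, and median filling is just one possible imputation rule. What matters is that the fill values are learned once and the same object is applied on both the training and serving paths.

```python
from statistics import median
from typing import Optional

Row = dict[str, Optional[float]]


class MedianImputer:
    """Learns per-feature fill values once, then applies them everywhere."""

    def __init__(self) -> None:
        self.fill_values: dict[str, float] = {}

    def fit(self, rows: list[Row]) -> "MedianImputer":
        names = {name for row in rows for name in row}
        for name in names:
            observed = [row[name] for row in rows if row.get(name) is not None]
            if observed:  # features with no observed values get no fill value
                self.fill_values[name] = median(observed)
        return self

    def transform(self, row: Row) -> dict[str, float]:
        # Missing keys and explicit None values are treated the same way.
        return {
            name: row[name] if row.get(name) is not None else fill
            for name, fill in self.fill_values.items()
        }


training_rows: list[Row] = [
    {"wait_min": 2.0, "speed_kmh": 30.0},
    {"wait_min": None, "speed_kmh": 50.0},
    {"wait_min": 6.0, "speed_kmh": None},
]
imputer = MedianImputer().fit(training_rows)

train_row = imputer.transform(training_rows[1])      # training path
serving_row = imputer.transform({"speed_kmh": 35.0})  # serving path, same object
```

Both paths fill a missing `wait_min` with the same learned value, so the model never sees a different default online than it saw during training.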

Silent degradation is addressed through continuous monitoring that tracks score distributions, calibration, and feature drift using statistical tests rather than manual inspection. When regressions appear, you detect them in hours, not days. Automated scoring can block risky deployments entirely before they ever reach production. The scale of this problem is significant: by some estimates, data scientists spend more than 50% of their time tracking down data errors by hand, the kind of manual work Michelangelo was designed to systematically eliminate.
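As one example of a statistical drift test, the sketch below compares a reference sample with live values using the two-sample Kolmogorov-Smirnov statistic and the standard large-sample critical value. The article does not say which tests Michelangelo uses, so the choice of KS, the function names, and the 0.05 significance level are assumptions made for illustration.

```python
import math
from bisect import bisect_right


def ks_statistic(reference: list[float], live: list[float]) -> float:
    """Largest gap between the two empirical cumulative distribution functions."""
    ref, cur = sorted(reference), sorted(live)
    return max(
        abs(bisect_right(ref, x) / len(ref) - bisect_right(cur, x) / len(cur))
        for x in ref + cur
    )


def drift_detected(reference: list[float], live: list[float], alpha: float = 0.05) -> bool:
    """Flag drift when the KS statistic exceeds the large-sample critical value."""
    n, m = len(reference), len(live)
    critical = math.sqrt(-math.log(alpha / 2) / 2) * math.sqrt((n + m) / (n * m))
    return ks_statistic(reference, live) > critical


reference = [i / 100 for i in range(100)]          # training-time feature values
similar = [(i + 0.5) / 100 for i in range(100)]    # same distribution, new sample
shifted = [0.5 + i / 100 for i in range(100)]      # live values shifted upward

similar_flag = drift_detected(reference, similar)
shifted_flag = drift_detected(reference, shifted)
```

The shifted sample is flagged and the similar one is not. A check like this could run on a schedule per feature, with the threshold tuned to keep false alarms manageable.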

At the infrastructure level, Michelangelo supports over 400 use cases across Uber's business, meaning a single flaw in shared pipeline logic or monitoring thresholds can silently degrade predictions across a vast range of products simultaneously.